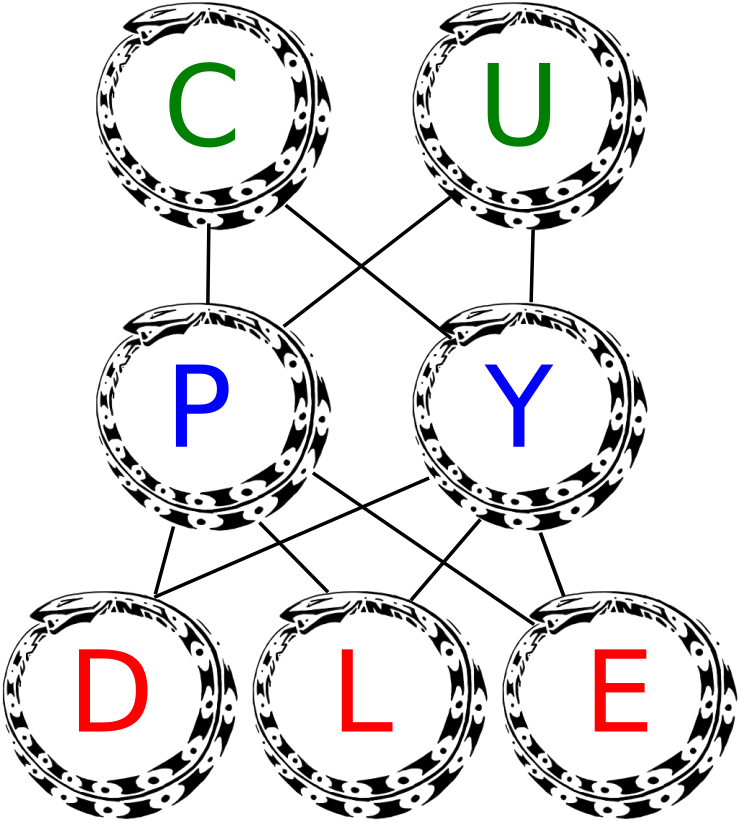

cupydle.dnn package

Submodules

cupydle.dnn.capas module

cupydle.dnn.dbn module

cupydle.dnn.funciones module

cupydle.dnn.graficos module

cupydle.dnn.graficos.dibujarCadenaMuestras(data, labels=None, chains=20, samples=10, gibbsSteps=1000, patchesDim=(28, 28), binary=False)

cupydle.dnn.graficos.dibujarCostos(**kwargs)

Recognized keyword arguments:

- kwargs['axe'] – matplotlib axes to draw on
- kwargs['mostrar'] – whether to show the plot
- kwargs['costoTRN'] – array with the training cost
- kwargs['costoVAL'] – array with the validation cost
- kwargs['costoTST'] – array with the test cost

cupydle.dnn.graficos.dibujarFiltros(w, nombreArchivo=None, automatico=None, formaFiltro=None, binary=False, mostrar=None)

cupydle.dnn.graficos.dibujarFnActivacionNumpy(self, axe=None, axis=[-10.0, 10.0], axline=[0.0, 0.0], mostrar=True)

cupydle.dnn.graficos.dibujarFnActivacionTheano(self, axe=None, axis=[-10.0, 10.0], axline=[0.0, 0.0], mostrar=True)

cupydle.dnn.graficos.dibujarGrafoTheano(graph, nombreArchivo=None)

Draws the Theano graph (functions, scans, nodes, etc.).

cupydle.dnn.graficos.dibujarMatrizConfusion(cm, clases, normalizar=False, titulo='Matriz de Confusion', cmap=<matplotlib.colors.LinearSegmentedColormap object>, axe=None, mostrar=False, guardar=None)

Prints and plots the confusion matrix. Normalization can be applied by setting normalizar=True.
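To make the effect of normalization concrete, here is a minimal sketch that divides each row of a confusion matrix by its row total, so every row reads as per-class proportions. It assumes row-wise normalization, which the docstring does not state explicitly, and `normalizar_filas` is a hypothetical helper, not part of cupydle.

```python
def normalizar_filas(cm):
    """Divide each row of a confusion matrix by its row sum (hypothetical helper)."""
    normalizada = []
    for fila in cm:
        total = sum(fila)
        # Guard against classes with no samples to avoid dividing by zero.
        normalizada.append([v / total if total else 0.0 for v in fila])
    return normalizada


cm = [[8, 2],
      [1, 9]]
print(normalizar_filas(cm))  # [[0.8, 0.2], [0.1, 0.9]]
```

With raw counts, a class with many samples dominates the color scale; the normalized form makes classes of different sizes comparable.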

cupydle.dnn.graficos.display_avalible()

Returns False when the code runs on a server, that is, when no display is available. Useful for deciding whether a plot can be shown.
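One common way to detect a missing display on X11 systems is to inspect the DISPLAY environment variable. The sketch below illustrates that idea only; it is an assumption about how such a check could work, not the actual body of display_avalible, and `hay_pantalla` is a hypothetical name.

```python
import os


def hay_pantalla(entorno=None):
    """Return True when DISPLAY is set and non-empty (hypothetical helper)."""
    # Accept an explicit mapping so the check can be exercised without
    # touching the real process environment.
    entorno = os.environ if entorno is None else entorno
    return bool(entorno.get("DISPLAY"))


print(hay_pantalla({"DISPLAY": ":0"}))  # True
print(hay_pantalla({}))                 # False
```

A caller could use the result to skip interactive display and save the figure to a file instead.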

cupydle.dnn.graficos.filtrosConstructor(images, titulo, formaFiltro, nombreArchivo=None, mostrar=False, forzar=False)

cupydle.dnn.graficos.imagenTiles(X, img_shape, tile_shape, tile_spacing=(0, 0), scale_rows_to_unit_interval=True, output_pixel_vals=True)

Transform an array with one flattened image per row into an array in which the images are reshaped and laid out like tiles on a floor.

This function is useful for visualizing datasets whose rows are images, and also columns of matrices for transforming those rows (such as the first layer of a neural net).

Parameters:

- X – a 2-D array in which every row is a flattened image (type note truncated in the source: "… be 2-D ndarrays or None")
- img_shape (tuple; (height, width)) – the original shape of each image
- tile_shape (tuple; (rows, cols)) – the number of images to tile (rows, cols)
- output_pixel_vals – whether the output should be pixel values (i.e. int8 values) or floats
- scale_rows_to_unit_interval – whether the values need to be scaled to [0, 1] before being plotted

Returns: an array suitable for viewing as an image (see Image.fromarray).

Return type: a 2-D array with the same dtype as X.
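The tiling idea can be sketched with plain NumPy. This is a simplified illustration, not the code of imagenTiles: it omits the scaling and pixel-value conversion options, and `mosaico` is a hypothetical name.

```python
import numpy as np


def mosaico(X, img_shape, tile_shape, tile_spacing=(0, 0)):
    """Lay out the rows of X as images on a grid (hypothetical, simplified helper)."""
    alto, ancho = img_shape
    filas, cols = tile_shape
    esp_v, esp_h = tile_spacing
    # Output size: all tiles plus the spacing between them
    # (no spacing after the last row or column).
    salida = np.zeros(((alto + esp_v) * filas - esp_v,
                       (ancho + esp_h) * cols - esp_h), dtype=X.dtype)
    for f in range(filas):
        for c in range(cols):
            indice = f * cols + c
            if indice >= X.shape[0]:
                continue  # fewer images than grid cells: leave the cell blank
            y = f * (alto + esp_v)
            x = c * (ancho + esp_h)
            salida[y:y + alto, x:x + ancho] = X[indice].reshape(img_shape)
    return salida


X = np.arange(16).reshape(4, 4)  # four flattened 2x2 images
rejilla = mosaico(X, img_shape=(2, 2), tile_shape=(2, 2), tile_spacing=(1, 1))
print(rejilla.shape)  # (5, 5)
```

Each 2x2 image lands in its own grid cell, and the one-pixel spacing stays at zero, which is why the result is 5x5 rather than 4x4.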

cupydle.dnn.gridSearch module

class cupydle.dnn.gridSearch.ParameterGrid(param_grid)

Bases: object

Grid of parameters with a discrete number of values for each. Can be used to iterate over parameter value combinations with the Python built-in function iter. Read more in the User Guide.

Parameters:

- param_grid – The parameter grid to explore, as a dictionary mapping estimator parameters to sequences of allowed values. An empty dict signifies default parameters. A sequence of dicts signifies a sequence of grids to search, and is useful to avoid exploring parameter combinations that make no sense or have no effect. See the examples below.

Examples

    >>> from cupydle.dnn.gridSearch import ParameterGrid
    >>> param_grid = {'a': [1, 2], 'b': [True, False]}
    >>> list(ParameterGrid(param_grid)) == (
    ...    [{'a': 1, 'b': True}, {'a': 1, 'b': False},
    ...     {'a': 2, 'b': True}, {'a': 2, 'b': False}])
    True
    >>> grid = [{'kernel': ['linear']}, {'kernel': ['rbf'], 'gamma': [1, 10]}]
    >>> list(ParameterGrid(grid)) == [{'kernel': 'linear'},
    ...                               {'kernel': 'rbf', 'gamma': 1},
    ...                               {'kernel': 'rbf', 'gamma': 10}]
    True
    >>> ParameterGrid(grid)[1] == {'kernel': 'rbf', 'gamma': 1}
    True

See also

GridSearchCV – uses ParameterGrid to perform a full parallelized parameter search.

__getitem__(ind)

Get the parameters that would be the ind-th in iteration.

Parameters:

- ind (int) – The iteration index

Returns: params – Equal to list(self)[ind]

Return type: dict of string to any
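The enumeration that such a grid performs for a single dictionary can be sketched with itertools.product. This is an illustration of the idea, not the class's implementation; `combinaciones` is a hypothetical helper, and sorting the keys is an assumption made here to get a reproducible order.

```python
from itertools import product


def combinaciones(param_grid):
    """Yield one dict per combination of the values in param_grid (hypothetical helper)."""
    claves = sorted(param_grid)  # fixed key order so the iteration is reproducible
    for valores in product(*(param_grid[k] for k in claves)):
        yield dict(zip(claves, valores))


rejilla = {"a": [1, 2], "b": [True, False]}
for params in combinaciones(rejilla):
    print(params)
```

An empty dictionary yields exactly one empty combination, which matches the convention that an empty dict stands for the default parameters.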

cupydle.dnn.loss module

cupydle.dnn.mlp module

cupydle.dnn.prueba module

cupydle.dnn.rbm module

cupydle.dnn.stops module

Stopping criteria for training.

class cupydle.dnn.stops.Patience(initial, key='hits', grow_factor=1.0, grow_offset=0.0, threshold=0.0001)

Bases: object

Stop criterion inspired by Bengio's patience method. The idea is to increase the number of iterations until stopping by a multiplicative and/or additive constant once a new best candidate is found.

func_or_key

function, hashable

Either a function or a hashable object. In the first case, the function will be called to get the latest loss. In the second case, the loss will be obtained from the corresponding field of the info dictionary.

grow_factor

float

Every time we find a sufficiently better candidate (determined by threshold) we increase the patience multiplicatively by grow_factor.
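The patience mechanism described above can be sketched as follows. This is a simplified illustration under stated assumptions, not the code of Patience: the class name `PacienciaSimple` is hypothetical, the loss is passed directly instead of being read from an info dictionary, and the update rule (taking the larger of the current patience and `n_iter * grow_factor + grow_offset`) is an assumption.

```python
class PacienciaSimple:
    """Simplified patience stop criterion (hypothetical; not cupydle's code)."""

    def __init__(self, initial, grow_factor=1.0, grow_offset=0.0, threshold=1e-4):
        self.patience = initial
        self.grow_factor = grow_factor
        self.grow_offset = grow_offset
        self.threshold = threshold
        self.best_loss = float("inf")

    def __call__(self, n_iter, loss):
        """Return True when training should stop at iteration n_iter."""
        if self.best_loss - loss > self.threshold:
            # Sufficiently better candidate: extend the iteration budget.
            self.patience = max(self.patience,
                                n_iter * self.grow_factor + self.grow_offset)
            self.best_loss = loss
        return n_iter >= self.patience


stop = PacienciaSimple(initial=3, grow_factor=2.0)
losses = [1.0, 0.8, 0.7, 0.7, 0.7, 0.7, 0.7]
detenido_en = None
for i, loss in enumerate(losses):
    if stop(i, loss):
        detenido_en = i
        break
print(detenido_en)  # 4
```

In this run the improvement at iteration 2 raises the budget from 3 to 4 iterations; no further improvement follows, so training stops at iteration 4.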


cupydle.dnn.unidades module

cupydle.dnn.utils module

cupydle.dnn.utils_theano module

cupydle.dnn.utils_theano.calcular_chunk(memoriaDatos, tamMiniBatch, cantidadEjemplos, porcentajeUtil=0.9)

Computes tamMacroBatch: how many examples should be sent to the GPU in each chunk.
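The source does not show how the chunk size is derived, so the following is only a sketch of one plausible approach: take a usable fraction of the free GPU memory and round the number of examples that fit down to a whole number of mini-batches. The function name `chunk_estimado` and the explicit `memoria_libre` argument are hypothetical; the real function has a different signature.

```python
def chunk_estimado(memoria_datos, memoria_libre, tam_mini_batch,
                   cantidad_ejemplos, porcentaje_util=0.9):
    """Estimate how many examples to send to the GPU per chunk (hypothetical)."""
    presupuesto = memoria_libre * porcentaje_util    # usable share of free memory
    if memoria_datos <= presupuesto:
        return cantidad_ejemplos                     # the whole dataset fits at once
    por_ejemplo = memoria_datos / cantidad_ejemplos  # average memory per example
    caben = int(presupuesto // por_ejemplo)          # examples that fit in the budget
    # Round down to a whole number of mini-batches, but send at least one.
    return max(tam_mini_batch, (caben // tam_mini_batch) * tam_mini_batch)


# 1000 examples needing 1000 units of memory, with 500 units free on the GPU.
print(chunk_estimado(1000, 500, tam_mini_batch=32, cantidad_ejemplos=1000))  # 448
```

Keeping the chunk a multiple of the mini-batch size means no mini-batch straddles two transfers.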

cupydle.dnn.utils_theano.calcular_memoria_requerida(cantidad_ejemplos, cantidad_valores, tamMiniBatch)

Computes the memory required for allocation and chunking, given the dataset and the mini-batch size.

cupydle.dnn.utils_theano.gpu_info(conversion='Gb')

Returns the relevant GPU information (memory). Values are real sizes, computed in base 1024 rather than the base 1000 that manufacturers quote.

Parameters:

- conversion (str) – unit of the returned result, Mb or Gb (default)
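The difference between base 1024 and base 1000 is easy to see with a small conversion sketch. `convertir_bytes` is a hypothetical helper that mirrors only the unit convention described above; it does not query any GPU.

```python
def convertir_bytes(cantidad_bytes, conversion="Gb"):
    """Convert a byte count to Mb or Gb using powers of 1024 (hypothetical helper)."""
    exponentes = {"Mb": 2, "Gb": 3}
    if conversion not in exponentes:
        raise ValueError("conversion must be 'Mb' or 'Gb'")
    return cantidad_bytes / 1024 ** exponentes[conversion]


print(convertir_bytes(8 * 1024 ** 3))  # 8.0
# A card advertised as "8 GB" in base 1000 holds fewer base-1024 gigabytes.
print(round(convertir_bytes(8 * 10 ** 9), 2))  # 7.45
```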

Function that loads the dataset into shared variables

The reason we store our dataset in shared variables is to allow Theano to copy it into the GPU memory (when code is run on GPU). Since copying data into the GPU is slow, copying a minibatch every time it is needed (the default behaviour if the data is not in a shared variable) would lead to a large decrease in performance.